White Label AI Website Builder: What Agencies Should Look For (2026)

You do not need another shiny tool that promises “one-click websites” and then leaves your team cleaning up the mess for weeks.

If you are an agency, a white label AI website builder only matters if it actually compresses your delivery cycle without breaking brand control, SEO, and client governance.

This guide is built for commercial investigation. You are evaluating platforms. You need a clear checklist, the right vendor questions, and a practical way to score options.

We will cover what “white label AI website builder” really means, which AI workflows are worth paying for, the must-have capabilities beyond hype, SEO and performance requirements, multi-client governance, pricing models, and a 10-minute demo script you can use to compare vendors.


A lot of vendors use the term “white label” loosely. Some mean “you can add your logo to a client report.” Others mean “your clients never see the vendor name anywhere.” Those are very different offers.

A true white label AI website builder is not just a site builder with AI prompts. It is an agency delivery system that you can brand as your own.

At minimum, a white label setup should let you control:

The portal domain, for example portal.youragency.com.

Then there are “agency-grade” features that separate a real white label platform from a reskinned tool:

White label is not a logo swap. It is an operating model. If the platform cannot support your internal workflow and client approvals, it will create more work than it saves.

There is also a major difference between AI-assisted and AI-autonomous.

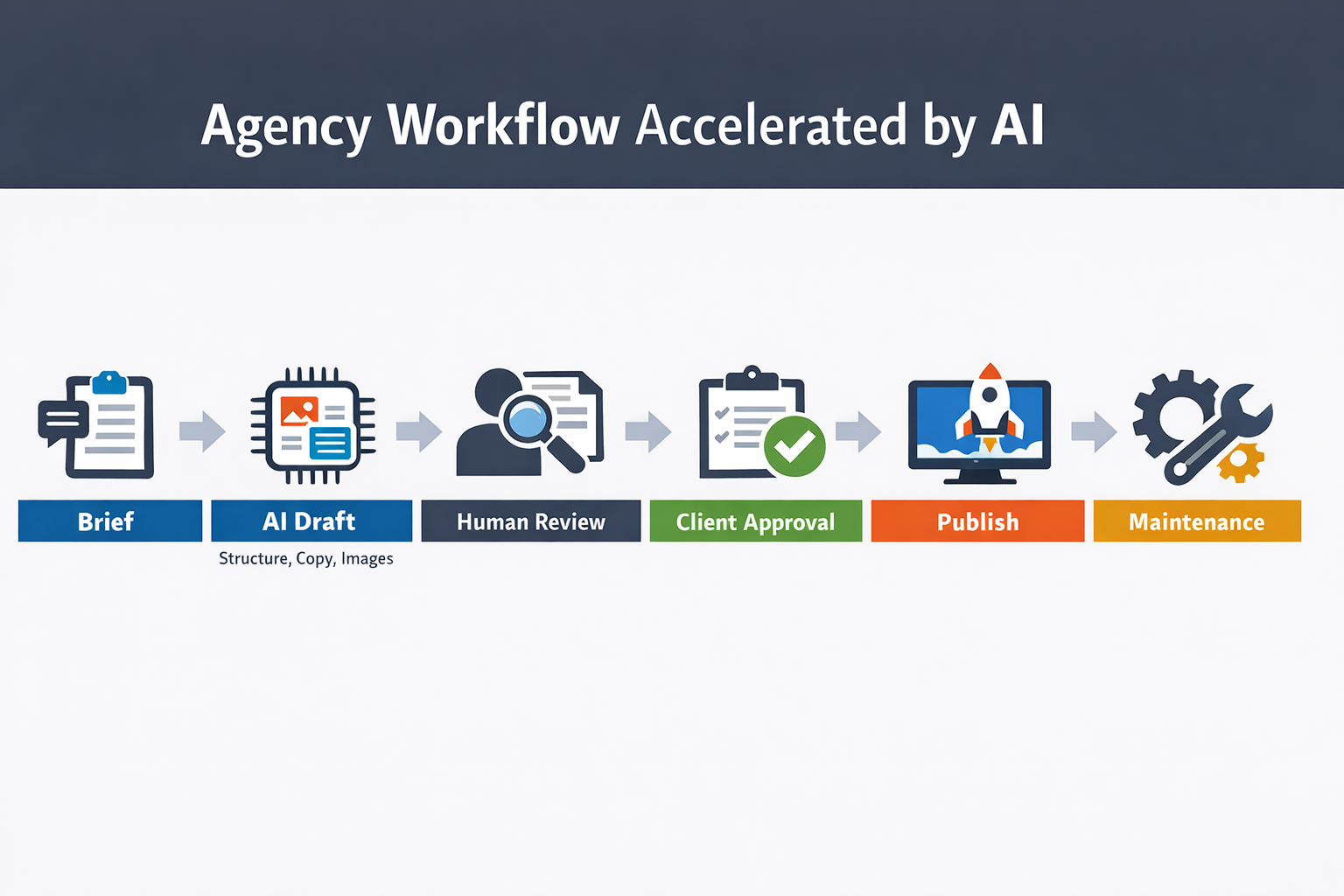

In 2026, the most reliable agencies operate in an AI-assisted model. They use AI to compress time to first draft and reduce revision cycles, while keeping editorial control.

If a vendor sells “fully autonomous websites,” ask how they prevent generic copy, wrong claims, legal issues, accessibility problems, and performance regressions. If the answer is vague, you have your answer.

When you evaluate a white label AI website builder, focus on measurable workflow improvements. Not “AI features,” but “time saved per site.”

A practical goal for many agencies is to cut the time to a client-ready first draft from days to hours, and to reduce revision cycles by making changes faster and safer.

The best AI acceleration happens before a designer opens Figma or a developer touches a theme.

Look for AI that can:

If you want a simple benchmark: for a standard 5-page business site, AI should deliver a coherent first draft that your team can polish in a single working session.

Client revisions are where time goes to die.

A white label AI website builder should make revisions faster through:

The goal is to handle the most common revision types quickly:

AI can help, but only if it is constrained properly.

A strong platform also helps with “boring” agency work, which is where agencies earn recurring revenue:

If you do this manually across 50+ client sites, you already know how expensive it is. AI plus governance can turn this into a repeatable process.

If maintenance is part of your offer, evaluate whether the platform supports safe updates and governance, because ongoing work increases risk.

Many platforms claim AI. Few deliver agency-grade control.

To make this concrete, you want three layers of control: (1) brand constraints, (2) content constraints, and (3) safe editing and release controls. When any of these are missing, AI output becomes unpredictable, and unpredictability is what kills agency margins.

Here is what to look for if you want predictable results.

Without brand controls, AI output trends toward generic.

Strong platforms let you define:

This matters because agencies sell consistency.

If your agency builds 20 sites a month, you need the AI to output drafts that match your standards. Otherwise you are paying for text that your team has to rewrite from scratch.

A quick test: ask the vendor to generate two different sites for two different industries and show how the controls prevent both from sounding the same.

The ability to edit and safely revert is non-negotiable.

Ask for:

In multi-client environments, mistakes happen. Rollback turns a mistake into a five-minute fix instead of a two-hour rebuild.

AI should make it faster to ship, but it also increases the speed of mistakes. Versioning and rollback protect your margins.
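To make the rollback idea concrete, here is a minimal Python sketch of an append-only version history with restore. The `PageHistory` class and its method names are hypothetical and do not reflect any vendor's API. A real platform would also store the author, a timestamp, and a diff.

```python
from dataclasses import dataclass, field


@dataclass
class PageHistory:
    """Append-only history of one page's content (hypothetical model)."""
    versions: list[str] = field(default_factory=list)

    def save(self, content: str) -> int:
        """Store a new version and return its 1-based version number."""
        self.versions.append(content)
        return len(self.versions)

    def current(self) -> str:
        """Return the newest saved version."""
        return self.versions[-1]

    def restore(self, version: int) -> int:
        """Roll back by re-saving an old version as the newest one.

        Nothing is deleted, so the bad edit stays in the log for auditing.
        """
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        return self.save(self.versions[version - 1])


history = PageHistory()
history.save("Hero: Trusted plumbers in Austin")
history.save("Hero: plumbrs in Austn")  # accidental edit
history.restore(1)                       # rollback is a single call
```

When you ask a vendor about rollback, the question is whether restore works like this (one action, nothing lost) or whether it means retyping the old content by hand.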

If you serve multilingual markets, you need more than translation.

Look for:

Also ask how the platform handles duplicate content risks and hreflang, because multilingual SEO is not a checkbox.
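For reference when you inspect a vendor's page source, here is a small Python sketch that builds the hreflang link tags you would expect to find on each language version of a page. The function name and the example.com URLs are placeholders for illustration only.

```python
from html import escape


def hreflang_tags(alternates: dict[str, str], default_lang: str) -> list[str]:
    """Build <link rel="alternate"> tags for every language version of a page.

    `alternates` maps a language code (for example "en" or "de") to that
    version's URL. Each language version should carry the same full set,
    including a tag that points at itself.
    """
    if default_lang not in alternates:
        raise ValueError("default_lang must be one of the alternates")
    tags = [
        f'<link rel="alternate" hreflang="{escape(lang)}" href="{escape(url)}" />'
        for lang, url in sorted(alternates.items())
    ]
    # x-default marks the fallback version when no listed language matches.
    tags.append(
        '<link rel="alternate" hreflang="x-default" '
        f'href="{escape(alternates[default_lang])}" />'
    )
    return tags


tags = hreflang_tags(
    {
        "en": "https://example.com/en/pricing",
        "de": "https://example.com/de/preise",
    },
    default_lang="en",
)
print("\n".join(tags))
```

If a platform translates pages but emits none of these tags, or emits them on only one language version, treat multilingual SEO as unsupported.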

AI can produce fast drafts. SEO and performance determine whether those drafts can rank and convert.

You are evaluating a platform. That means you need to think like an SEO lead and a technical lead.

A site can look beautiful and still be a technical SEO nightmare.

Minimum requirements:

Agency tip: ask the vendor to walk through editing these settings live on a real page. If the SEO controls are hidden behind “advanced” tabs or cannot be customized per page, you will feel it during launch week.

If the vendor cannot explain how their HTML is structured, ask for an example page source.

If you want your AI-generated sites to rank, build a simple editorial rule: every key page needs at least one piece of proof that only that business can claim. A real number, a real case study, a real guarantee policy, a real team story. That is what makes the content “helpful,” and it is also what makes it convert.

For SEO guidance on quality and helpful content, see the Google Search Central documentation.

Here is the business reason to care. On web.dev, Rakuten 24 reported that optimizing Core Web Vitals improved conversion rate by 33.13% and revenue per visitor by 53.37% in an A/B test. That is not “nice to have,” that is a margin multiplier for your clients and a differentiator for your agency. See the case study: Rakuten 24 Core Web Vitals case study.

Your client does not care what generated the site. They care that it loads fast.

Ask how the platform handles:

A reliable baseline is that the platform should be able to achieve strong Core Web Vitals with reasonable content. If the vendor cannot show performance results on real sites, you will spend your time fighting the system.

For performance best practices, the performance guidance on web.dev is a solid reference.

AI raises a new risk: “content that looks fine but says nothing.”

To protect quality, you need:

Google’s guidance makes it clear that quality matters more than how content is produced. What matters is whether it is helpful and original.

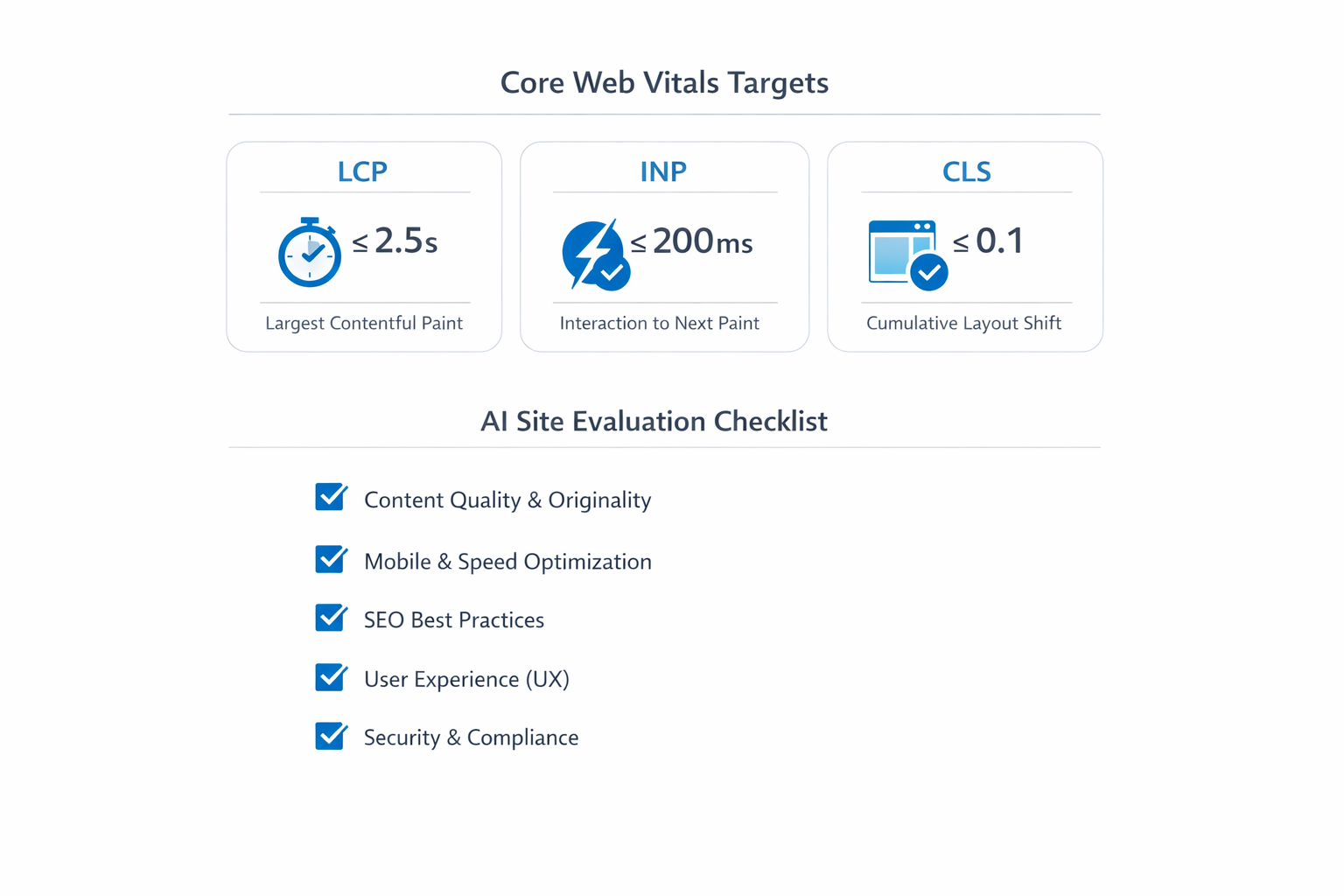

In practical terms, you should build an internal QA checklist:

This is the part many “AI builders” ignore.

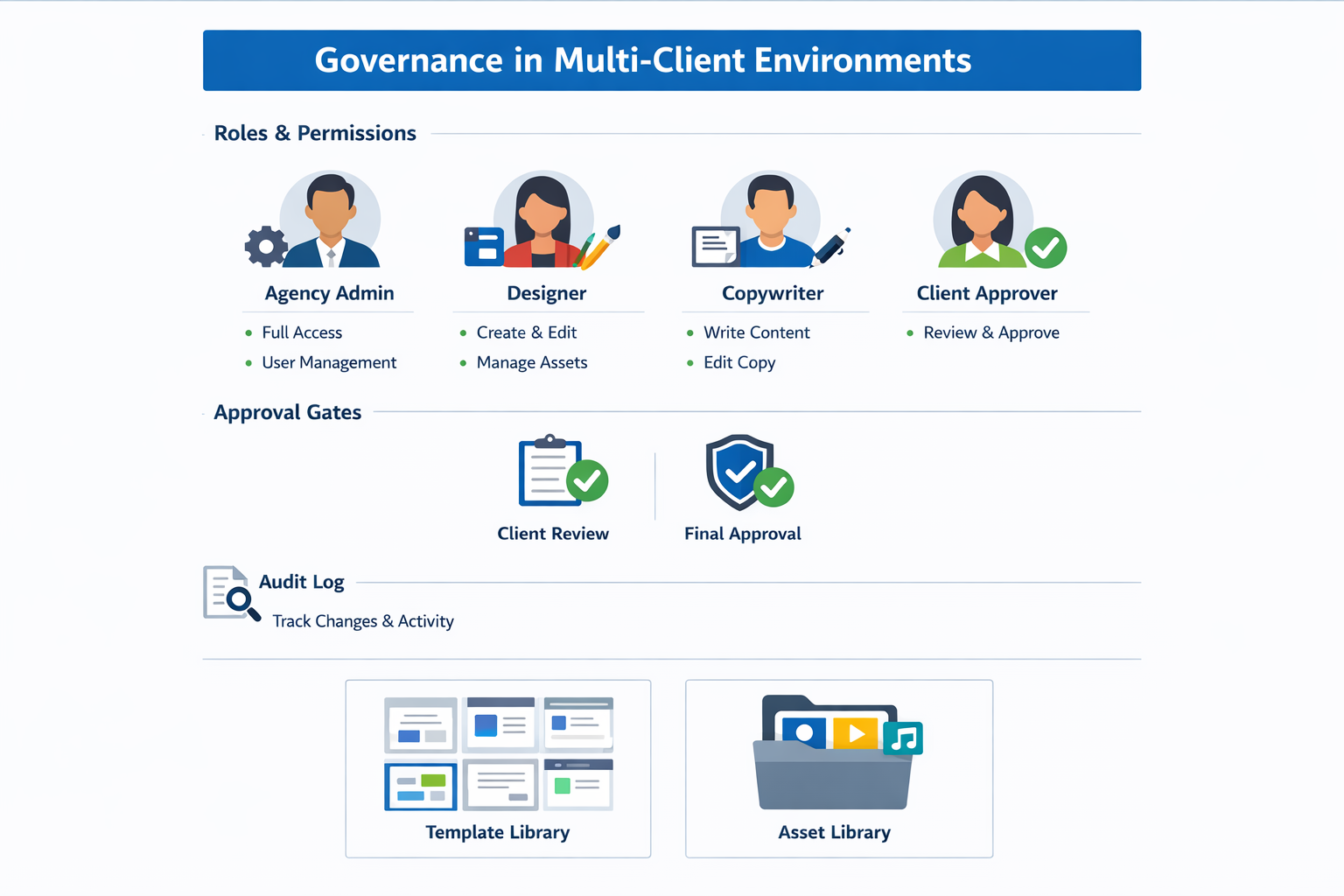

As an agency, you are not building one site. You are building and managing dozens or hundreds.

Governance is what keeps your team efficient and keeps clients from breaking things.

Your platform should support at least:

For approvals, look for:

If you are in regulated industries or you support enterprise clients, audit logs become critical.

Security also matters. You do not need a full audit to shortlist a platform, but you should ask about the basics, and you can use the OWASP Top 10 as a reference for web application security.

Agencies win by reuse.

A strong white label AI website builder should let you maintain:

If every project starts from scratch, you are not scaling.

Most agencies do not lose time on the first draft. They lose time on the second and third round of edits. That is why the editor experience matters more than the AI headline feature.

Use these real-world scenarios to test editing:

If your team cannot do these quickly, you will be stuck in manual layout fixes.

Builders usually fall into two models:

For agencies, component-based is usually better, as long as you can create and manage your own component library. The goal is to make “the right thing” the easiest thing.

Even if you love a platform, you should understand lock-in risk. Ask:

A vendor does not need to support full HTML export to be a good choice, but they should have a clear story for client ownership, backups, and continuity.

AI can speed up drafts, but it can also ship junk if the platform does not enforce technical hygiene.

If you want a simple north star, Google recommends that site owners achieve good Core Web Vitals. The current Core Web Vitals targets are LCP within 2.5 seconds, INP of 200 milliseconds or less, and CLS of 0.1 or less. See Google's “Understanding Core Web Vitals and Google search results” and the Web Vitals overview.
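If you want to turn those targets into a repeatable check, a short Python sketch like the one below is enough. The metric key names and the sample measurements are made up for illustration. The thresholds are the "good" targets: LCP at most 2.5 seconds, INP at most 200 milliseconds, CLS at most 0.1.

```python
# "Good" Core Web Vitals thresholds (values at or below the limit pass).
GOOD_THRESHOLDS = {
    "lcp_seconds": 2.5,
    "inp_ms": 200,
    "cls": 0.1,
}


def failing_vitals(measured: dict[str, float]) -> list[str]:
    """Return the names of metrics that miss the "good" threshold."""
    return [
        name
        for name, limit in GOOD_THRESHOLDS.items()
        if measured[name] > limit
    ]


# Hypothetical measurements for one client page.
sample = {"lcp_seconds": 3.1, "inp_ms": 180, "cls": 0.1}
print(failing_vitals(sample))  # ['lcp_seconds']
```

Run the same check on a vendor's sample site and on one of your own builds, so you compare platforms against the same bar.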

When you evaluate a white label AI website builder, your team should be able to set and edit, without hacks:

If the platform says “the AI handles SEO,” push back.

You are not buying “SEO.” You are buying control.

The fastest way to lose trust is to promise SEO improvements while the platform locks basic metadata, URL control, or redirects. Your clients will blame you, not the vendor.

AI-generated pages can be lightweight, but only if the builder keeps the output simple and fast.

On your demo call, ask the vendor to run PageSpeed Insights on a sample site and explain what is happening. Then confirm you can influence the drivers:

If you want an agency-friendly SOP, use this quick check:

White label does not just mean branding. It also means you are responsible for client risk.

At minimum, your platform should support:

Use the OWASP Top 10 (Top Ten Web Application Security Risks) as a baseline for the kinds of risks you are trying to prevent.

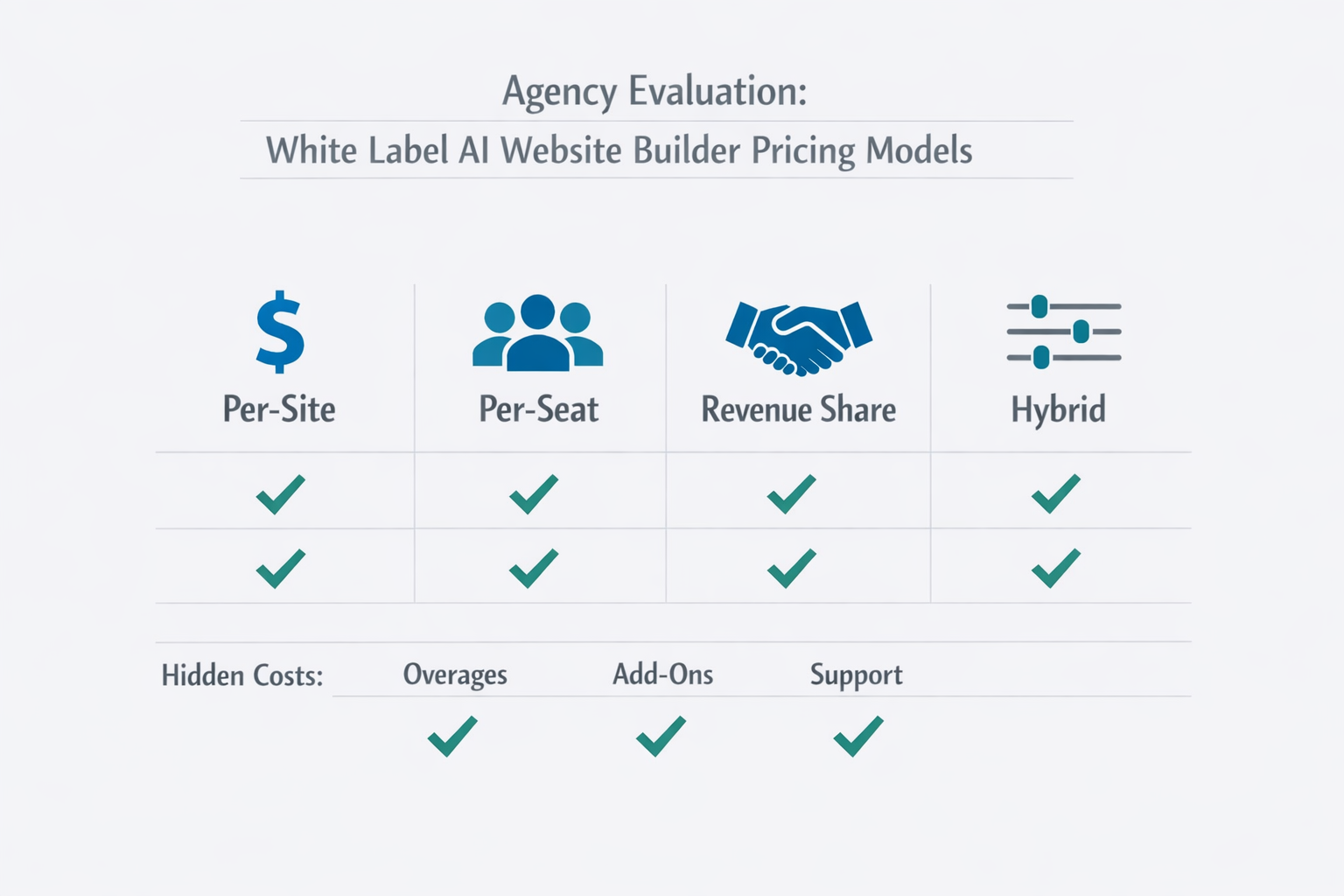

Agency takeaway: if your vendor pricing model makes your costs unpredictable, your margins will be unpredictable too. Before you commit, write down (1) your monthly fixed cost, (2) your cost per additional client, (3) your worst-case overage scenario, and (4) your exit plan.

Pricing is where agencies get burned.

Vendors use different models:

Your job is to understand your cost structure so you can price profitably.

Ask these questions early:

A realistic planning range for many agencies is 20 to 80 “meaningful generations” per standard site when you include first draft plus revisions. If a vendor bills per generation, you need to translate that into a cost per client site and a cost per month.

A simple way to model it:

Your numbers will vary, but the point is that “credits” are not abstract. They are a line item in your margin.
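As a rough illustration of that translation, the Python sketch below converts the 20 to 80 generation range into a cost per site and a cost per month. The price per generation is a made-up placeholder, not any vendor's rate, and the volume of 20 sites a month is only an example.

```python
def ai_cost_per_site(generations: int, price_per_generation: float) -> float:
    """AI spend for one site: generations used times the unit price."""
    return generations * price_per_generation


PRICE_PER_GENERATION = 0.25  # hypothetical; replace with the vendor's quote
SITES_PER_MONTH = 20         # example volume; use your own

low = ai_cost_per_site(20, PRICE_PER_GENERATION)   # light revisions
high = ai_cost_per_site(80, PRICE_PER_GENERATION)  # heavy revisions

print(f"Per site:  {low:.2f} to {high:.2f}")
print(f"Per month: {low * SITES_PER_MONTH:.2f} to {high * SITES_PER_MONTH:.2f}")
```

The spread between the low and high figures is the number to watch. A 4x range in generations is a 4x range in that line item.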

Hidden pricing complexity is a risk.

A practical way to model your cost is to estimate:

If the vendor cannot provide a clear billing example, expect surprise invoices.
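One way to pressure-test a billing example is to model the whole invoice yourself. The sketch below assumes a plan made of a base fee, per-seat and per-site fees, and bundled AI credits with an overage rate. The `Plan` fields and every number are hypothetical, so substitute whatever components the vendor actually charges for.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Plan:
    """Hypothetical vendor plan; every number used below is a placeholder."""
    base_fee: float            # fixed monthly platform fee
    seat_fee: float            # per team member
    site_fee: float            # per active client site
    included_credits: int      # AI generations bundled in the plan
    overage_per_credit: float  # price of each generation beyond the bundle


def monthly_cost(plan: Plan, seats: int, sites: int, credits_used: int) -> float:
    """Total monthly invoice, charging overage only beyond bundled credits."""
    overage = max(0, credits_used - plan.included_credits)
    return (
        plan.base_fee
        + plan.seat_fee * seats
        + plan.site_fee * sites
        + plan.overage_per_credit * overage
    )


plan = Plan(base_fee=200.0, seat_fee=20.0, site_fee=5.0,
            included_credits=1000, overage_per_credit=0.5)

# Typical month: 30 sites at about 30 generations each stays inside the bundle.
typical = monthly_cost(plan, seats=5, sites=30, credits_used=900)
# Worst case: 30 sites at 80 generations each.
worst_case = monthly_cost(plan, seats=5, sites=30, credits_used=2400)
print(typical, worst_case)  # 450.0 1150.0
```

With these placeholder numbers, the worst case is more than double the typical month, and all of the difference comes from overage. That is the scenario to ask the vendor to confirm in writing.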

If you sell websites, you are not selling “pages.” You are selling outcomes.

Here is a simple packaging model many agencies use. Notice that AI is not mentioned in the package names. Clients buy clarity, speed, and results, not tooling.

- Starter: 1 language, 5 pages, basic SEO, launch in 7 to 10 days.
- Growth: strategy workshop, conversion copy, blog setup, tracking, launch in 2 to 4 weeks.
- Premium: custom components, advanced SEO, multi-location pages, launch plus A/B testing.
- Maintenance: monthly updates, new pages, reporting, ongoing optimization.

You can also add explicit deliverables that protect scope:

- number of revision rounds
- timeline for approvals
- content inputs required from the client
- what is included in SEO setup

AI can increase your margins by reducing labor hours. But you should not race to the bottom.

Instead, use AI to deliver faster, with higher consistency.

A website builder does not live alone. Agencies need it to connect to the rest of the stack.

At minimum, you should be able to add and manage Google Analytics and conversion tracking cleanly, ideally per site and per environment. Ask how the platform handles:

Lead capture is where the money is. Test form integrations for reliability. If you cannot connect forms to a CRM or an automation tool, your client will blame you, not the vendor.

For service businesses, bookings matter. For some niches, payments matter. You want a platform that supports the embed patterns you need, and does not collapse performance with heavy scripts.

Ask whether you can maintain a shared asset library and push approved blocks across sites. That is how you keep brand consistency at scale.

Listicles will not help you pick the right platform. A demo script will.

Below are 15 questions to ask sales, and a 10 minute flow you can use to compare vendors.

Use the same flow for every vendor. That is how you compare fairly.

Minute 0 to 2: Create a project from a brief

Minute 2 to 4: Control the brand

Minute 4 to 6: Edit safely

Minute 6 to 8: SEO and performance check

Minute 8 to 10: Governance

If a vendor cannot complete this in a live demo, they are not ready for agency scale.

Here is a realistic example of how this plays out.

You are evaluating two vendors for a 30-client rollout. In the demo, Vendor A generates a decent draft, but their “white label” stops at a login logo. Client invite emails come from the vendor domain, there is no custom portal domain, and there is no staging approval step. When you ask for rollback, the rep says, “You can manually undo.”

On the scorecard, they score 2/5 on White label, 2/5 on Editing safety, and 2/5 on Governance. You also notice pricing is seat-based plus AI credits, and the rep cannot show a concrete monthly cost example for your team size. That is another 2/5 for Pricing clarity.

Vendor B is not perfect, but they show a branded portal on your domain, role-based approvals, version history with restore, and page-level regeneration. They can explain exactly what counts as a generation and give you a sample invoice for 20 sites.

You do not need a “better AI.” You need a safer operating system. The scorecard gives you permission to walk away from Vendor A, even if their marketing demo looked slick.

Here is a practical way to score options. Use a 1 to 5 scale.

| Category | What to score | 1 (weak) | 3 (ok) | 5 (excellent) |
|---|---|---|---|---|
| White label | Branding, domain, portal | Logo only | Portal branded | Full experience white labeled |
| AI draft quality | Structure and copy quality | Generic | Good baseline | Strong, niche-aware |
| Control | Style, voice, constraints | Few controls | Some controls | Deep controls + lockable |
| Editing safety | Versioning, rollback | None | Basic | Robust + diffing |
| SEO | Metadata, schema, URLs | Limited | Standard | Advanced + clean HTML |
| Performance | Images, CDN, scripts | Slow | Acceptable | CWV-focused |
| Governance | Roles, approvals, logs | Minimal | Adequate | Enterprise-grade |
| Pricing clarity | Billing model transparency | Confusing | Understandable | Predictable, simple |

Print this and use it. The best platform is the one that scores high on control, governance, and predictable costs.
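If you prefer a spreadsheet-style total over a printed sheet, the scorecard reduces to a weighted average. In the Python sketch below, the double weights on Control, Governance, and Pricing clarity are an assumption based on the emphasis above, not a fixed rule. Vendor A's four scores of 2 come from the example, and every other score is invented for illustration.

```python
# Assumed weights: control, governance, and pricing clarity count double.
WEIGHTS = {
    "White label": 1,
    "AI draft quality": 1,
    "Control": 2,
    "Editing safety": 1,
    "SEO": 1,
    "Performance": 1,
    "Governance": 2,
    "Pricing clarity": 2,
}


def weighted_score(scores: dict[str, int]) -> float:
    """Weighted average of 1-to-5 category scores, rounded to 2 decimals."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing categories: {sorted(missing)}")
    if any(not 1 <= scores[name] <= 5 for name in WEIGHTS):
        raise ValueError("scores must be between 1 and 5")
    total = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    return round(total / sum(WEIGHTS.values()), 2)


vendor_a = {
    "White label": 2, "AI draft quality": 4, "Control": 3, "Editing safety": 2,
    "SEO": 3, "Performance": 3, "Governance": 2, "Pricing clarity": 2,
}
vendor_b = {
    "White label": 5, "AI draft quality": 3, "Control": 4, "Editing safety": 4,
    "SEO": 3, "Performance": 3, "Governance": 4, "Pricing clarity": 4,
}
print(weighted_score(vendor_a), weighted_score(vendor_b))
```

Agree on the weights before the demos, not after, so the total cannot be bent toward whichever vendor presented best.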

If your goal is to deliver more sites without hiring a bigger team, you need a platform designed for agencies.

With lindo.ai, agencies can build AI-powered websites for clients and keep delivery consistent across projects.

What to evaluate in a lindo.ai demo:

If you are comparing multiple tools, use the same brief and the same success criteria every time. That is how you avoid being swayed by a polished interface. The questions in this guide apply to any vendor, including us.

If you want to explore options for your agency, start here: lindo.ai.

Some platforms look great in a marketing demo and fail in real delivery.

Here are red flags I would treat as deal-breakers for most agencies.

If you can add your logo but the editor still shows the vendor name, client emails come from the vendor domain, or the portal URL cannot be branded, then you are not white labeling. You are reselling.

That might still be acceptable for a small offer, but it is not what most agencies mean when they say “white label.”

If the AI cannot follow rules like “never mention we are the vendor,” “never claim guarantees,” “use this exact CTA,” and “avoid these words,” you will spend your time rewriting.

Ask the vendor to show how constraints are set and enforced. If the answer is “our AI learns over time,” that is not a control system.
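Whatever the vendor says about enforcement, you can run your own check on generated copy before it reaches a client. The Python sketch below flags banned phrases and a missing call to action. The `CopyRules` structure, the phrases, and the CTA text are all hypothetical examples, and this is a post-generation lint rather than a replacement for controls inside the platform.

```python
import re
from dataclasses import dataclass, field


@dataclass
class CopyRules:
    """Agency rules for generated copy (hypothetical structure)."""
    banned_phrases: list[str] = field(default_factory=list)
    required_cta: str = ""


def violations(copy: str, rules: CopyRules) -> list[str]:
    """Return a human-readable list of rule violations found in `copy`."""
    problems = []
    for phrase in rules.banned_phrases:
        # Case-insensitive match at the start of a word, so "guarantee"
        # also flags "Guaranteed" and "guarantees".
        if re.search(rf"\b{re.escape(phrase)}", copy, flags=re.IGNORECASE):
            problems.append(f"banned phrase: {phrase}")
    if rules.required_cta and rules.required_cta not in copy:
        problems.append(f"missing CTA: {rules.required_cta}")
    return problems


rules = CopyRules(
    banned_phrases=["guarantee", "world-class"],
    required_cta="Book a free consultation",
)
draft = "We offer Guaranteed world-class results. Contact us today."
for problem in violations(draft, rules):
    print(problem)
```

A check like this catches wording rules only. It cannot tell whether a claim is true, so human review still applies.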

Originality is not just “no plagiarism.” It is specificity. If the AI always outputs generic claims and generic feature lists, you will not rank and you will not convert.

A good platform helps you inject unique proof like case studies, numbers, differentiators, local details, and real service packages, and it keeps those elements consistent across the site.

If you cannot control redirects, canonicals, metadata, and URL structure, you will eventually hit a wall. Clients will ask: can we change this URL, why is this page not indexed, can we add FAQ schema.

If your answer has to be “we cannot because the platform does not allow it,” you will lose trust.
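The FAQ schema request is a good test of how open a platform is, because the markup itself is simple. Below is a Python sketch that builds FAQPage structured data in JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The function name and the sample question are illustrative. The platform still has to let you place the output in a `<script type="application/ld+json">` element on the page.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


markup = faq_jsonld([
    ("How long does a website take?", "Most sites launch in 7 to 10 days."),
])
print(markup)
```

If the builder offers no per-page field for custom head or body code, you cannot add this markup yourself, and that is worth knowing before launch week.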

If changes go directly to production with no review flow, you are one accidental edit away from a public mistake. Staging and approvals are also how you keep clients from breaking layouts.
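A review flow is easier to evaluate when you picture it as a small state machine. The Python sketch below is one hypothetical shape for it. The state names, role names, and transition table are assumptions for illustration, not a description of any vendor's workflow.

```python
from enum import Enum


class State(Enum):
    DRAFT = "draft"
    IN_REVIEW = "in_review"
    APPROVED = "approved"
    PUBLISHED = "published"


# Allowed transitions and the (hypothetical) role that may perform each one.
TRANSITIONS = {
    (State.DRAFT, State.IN_REVIEW): "editor",
    (State.IN_REVIEW, State.DRAFT): "approver",     # changes requested
    (State.IN_REVIEW, State.APPROVED): "approver",
    (State.APPROVED, State.PUBLISHED): "publisher",
}


def advance(current: State, target: State, role: str) -> State:
    """Move a change to `target` if the transition exists and the role fits."""
    required = TRANSITIONS.get((current, target))
    if required is None:
        raise ValueError(f"{current.value} -> {target.value} is not allowed")
    if role != required:
        raise PermissionError(
            f"{role} cannot move {current.value} to {target.value}"
        )
    return target


state = advance(State.DRAFT, State.IN_REVIEW, "editor")
state = advance(state, State.APPROVED, "approver")
state = advance(state, State.PUBLISHED, "publisher")
```

The important property is what is missing from the table: there is no path from draft straight to published, so nothing goes live without passing review.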

Usage-based AI billing can be fair, but only if it is transparent. If you cannot reliably estimate monthly costs per client, you cannot price your own packages confidently.

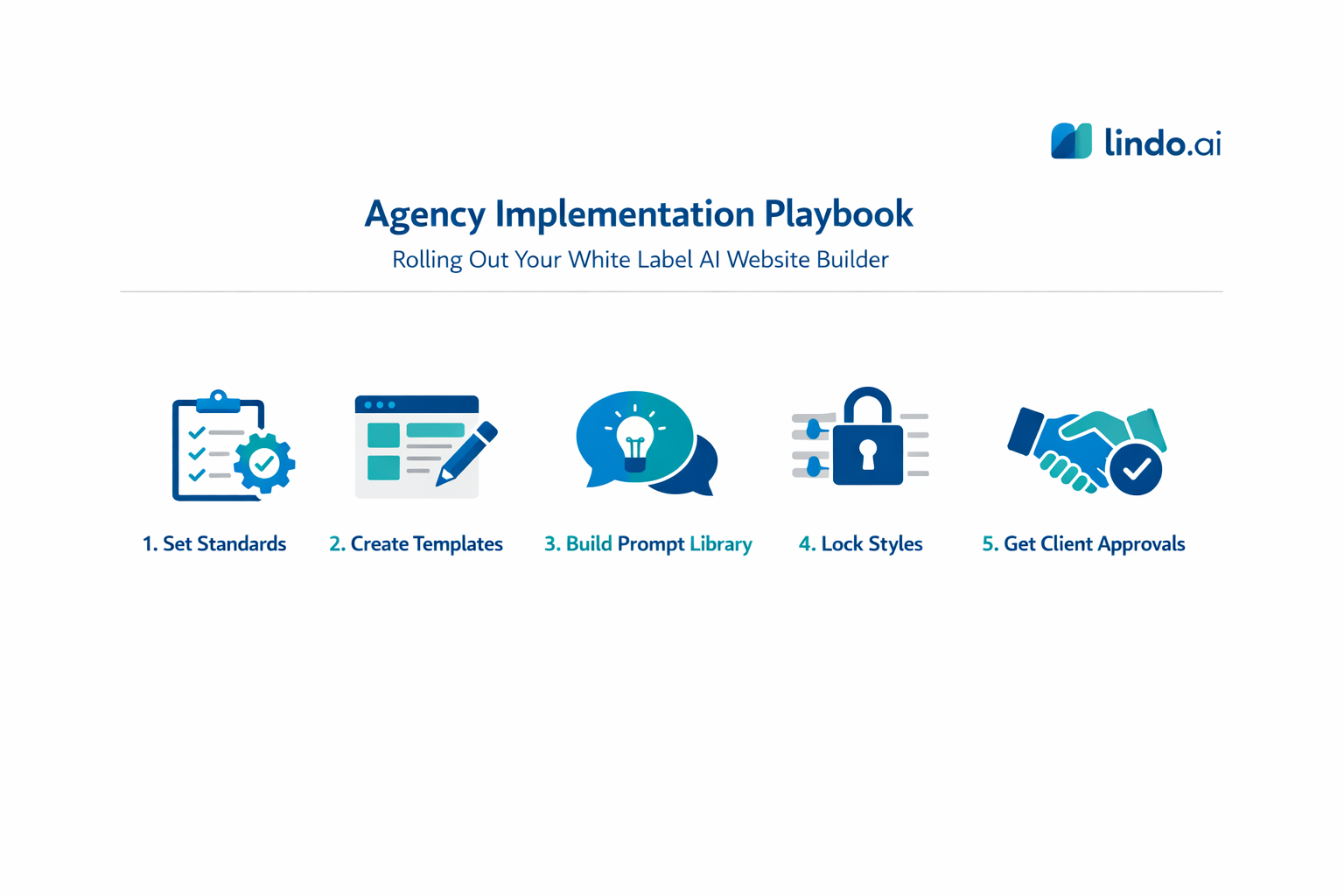

Buying a platform is the easy part. Operationalizing it is where most teams stumble.

Write down what “good” looks like: a minimum page set, minimum conversion elements, minimum SEO setup, and performance expectations. This becomes your QA checklist.

Pick the niches you sell the most and create a standard page set, reusable blocks, a voice and style preset, and an asset checklist. The second site in a niche should take less time than the first.

A prompt library is a set of repeatable instructions aligned with your templates. Example prompts: generate 3 premium hero options mentioning {service} and {city} and avoiding hype; write a 6-step process section with each step under 25 words; draft a 6-question FAQ including pricing, timeline, and client inputs.
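A prompt library can start as nothing more than named templates with placeholders. The Python sketch below stores the three example prompts and fills in `{service}` and `{city}`. The dictionary keys, the function name, and the sample values are illustrative choices.

```python
PROMPTS = {
    "hero": (
        "Generate 3 premium hero options mentioning {service} and {city}. "
        "Avoid hype."
    ),
    "process": "Write a 6-step process section with each step under 25 words.",
    "faq": "Draft a 6-question FAQ including pricing, timeline, and client inputs.",
}


def render_prompt(name: str, **values: str) -> str:
    """Fill a named template; fail loudly on an unknown name or missing value."""
    try:
        return PROMPTS[name].format(**values)
    except KeyError as exc:
        raise ValueError(f"missing prompt or placeholder: {exc}") from None


prompt = render_prompt("hero", service="roof repair", city="Denver")
print(prompt)
# Generate 3 premium hero options mentioning roof repair and Denver. Avoid hype.
```

Failing on a missing placeholder is deliberate. A prompt that silently ships with a literal `{city}` in it produces exactly the kind of generic draft you are trying to avoid.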

Lock typography scale, spacing system, component styles, and brand colors. Let the team customize inside a safe framework.

Set expectations that clients comment and approve at milestones, and the agency controls edits. This reduces revision churn.

Must-have controls checklist: lock the style system, enforce roles and permissions, require staging approvals, keep version history, and define a “proof rule” for every key page (a real number, case study, or guarantee).

The best agencies treat AI as a process upgrade, not a marketing gimmick. The value is in standardization and speed, not in “the AI wrote it.”

AI-generated sites can be SEO-friendly if the platform outputs clean HTML and gives you control over metadata, headings, URLs, and performance. The bigger issue is content quality. AI drafts must be reviewed so they become specific, original, and useful, not generic.

Real white label means your clients do not see the vendor brand across the portal, editor, emails, and domain. It also means the platform supports agency operations: templates, multi-client governance, roles, and approvals.

Do not price based on “AI did it.” Price based on the outcome and the speed you can deliver. Use vendor costs as your margin floor, then package strategy, copy quality, SEO, and maintenance as the real value.

Brand voice controls, reusable templates, and constrained regeneration are the big ones. You want AI that generates within your framework, plus editing tools and approvals that make quality easy to enforce.